The era of “chat wrappers” is completely dead. As of Q2 2026, the transition from basic text-generation LLMs to fully autonomous agentic code workflows is accelerating rapidly. However, a stark divide is emerging between teams trying to prompt their way to success and those engineering rigorous, deterministic architectural patterns.

This post dissects the mandatory systemic shifts required to build production-grade agentic tooling, specifically focusing on graph-based state management, stateless validation, and Schema-Driven development over natural language semantics.

1. Stop Asking, Start Telling: The Pydantic Mandate

The most common failure in legacy 2024-2025 AI systems was trusting the LLM’s natural language output directly. Modern agentic code strictly isolates reasoning boundaries.

[!WARNING] If your agent relies on parsing free-text responses via RegEx to understand if it succeeded, your pipeline is fundamentally broken.

Agents should only communicate via strict, enforced schema structures (like JSON locked to Pydantic models). The moment an output fails schema validation, the agentic node shouldn’t crash—it should automatically loop back, analyze the schema violation, and “repair” the structure without human intervention.

class AgentResponse(BaseModel):

action_type: Literal["WRITE_FILE", "EXECUTE_COMMAND", "ASK_USER"]

confidence_score: float

payload: Dict[str, Any]

@validator("confidence_score")

def must_be_high_confidence(cls, v):

if v < 0.8:

raise ValueError("Agent must have >80% confidence to execute without human review.")

return v

2. Graph Orchestration > Linear Prompting

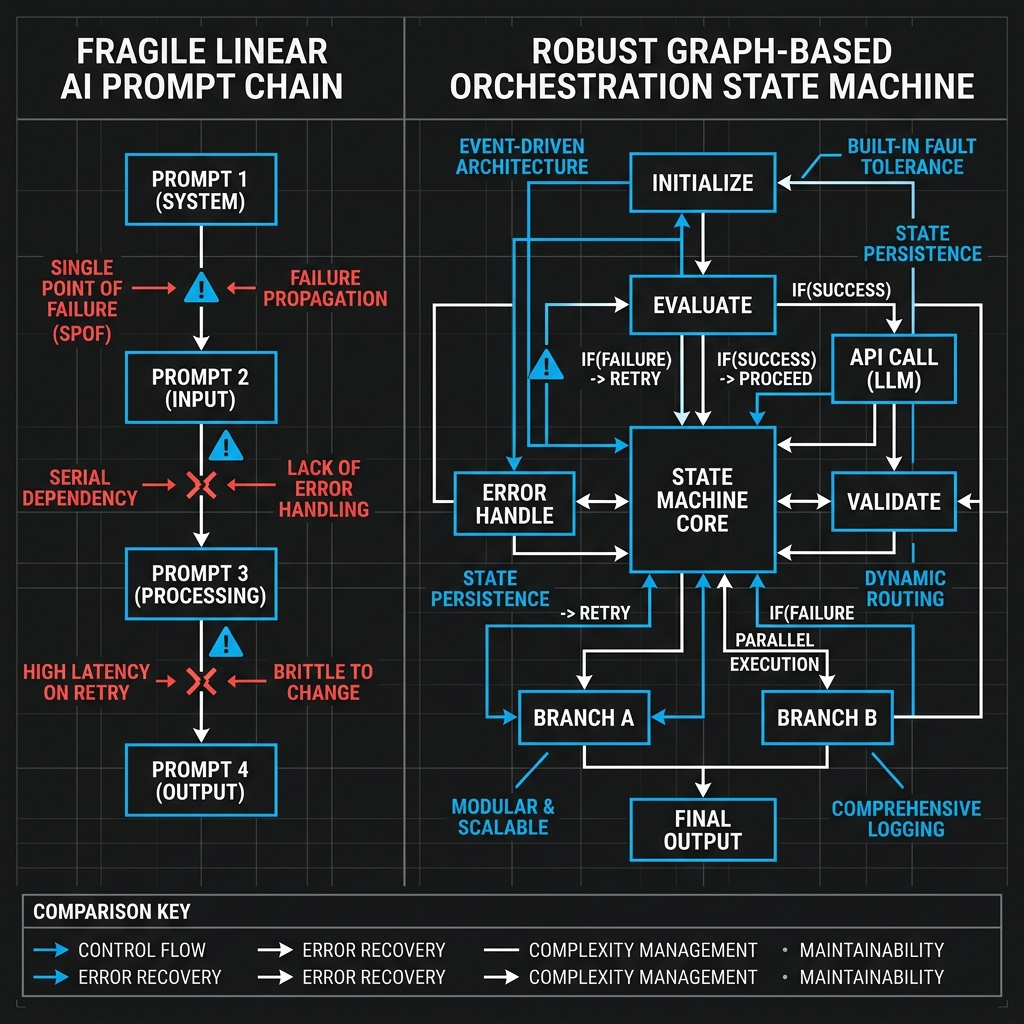

If you represent your agentic workflow as a straight chain (Prompt A → Prompt B → Output), you are building fragile infrastructure. Real engineering problems are not linear.

The industry has firmly transitioned to Graph-Based Orchestration (e.g., LangGraph architecture). By structuring subagents as distinct nodes on a state machine, the system can dynamically evaluate its position, fall back to “Error Handle” nodes, or trigger specialized “Critic Agents” before ever making a commit.

A visual mapping of linear fragility vs Graph-Based deterministic state machines.

A visual mapping of linear fragility vs Graph-Based deterministic state machines.

3. The “Produce → Critique → Repair” Loop

We do not let junior engineers commit code without review; we shouldn’t let agents do it either.

The architecture we rely on heavily in our internal tools incorporates distinct personas:

- The Generator Node: Receives the state and writes the code.

- The Critic Node: An entirely isolated agent instance (often running on a disparate model weight to avoid single-mode blindness) that reads the diff, runs static analysis, and attempts to formulate edge cases that break the Generator’s logic.

- Execution Sandbox: The code is compiled inside an ephemeral Lima VZ container. If the tests fail, the stdout is routed directly back into the Generator’s context window.

This loop guarantees that when an event finally breaks the repository boundary, it has already mathematically survived isolation testing.

[!TIP] Observability is critical. Log the reasoning traces (what the agent “thought” before execution) as diligently as you log API latencies. When a graph node loops infinitely, you need the trace telemetry to debug the prompt state.

Conclusion

Building an agentic coding environment is no longer an exercise in prompt engineering—it is an exercise in distributed systems design. By enforcing strict schemas, mapping logic via directed cyclical graphs, and isolating agents by duty, organizations can transform stochastic models into deterministic engineering machines.

Vitruvian Software Open Source

Vitruvian Software Open Source